They’re hindering Americans’ return to Earth, too, actually

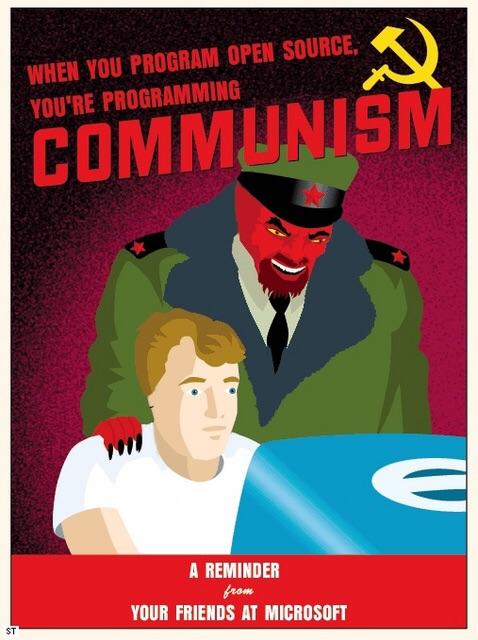

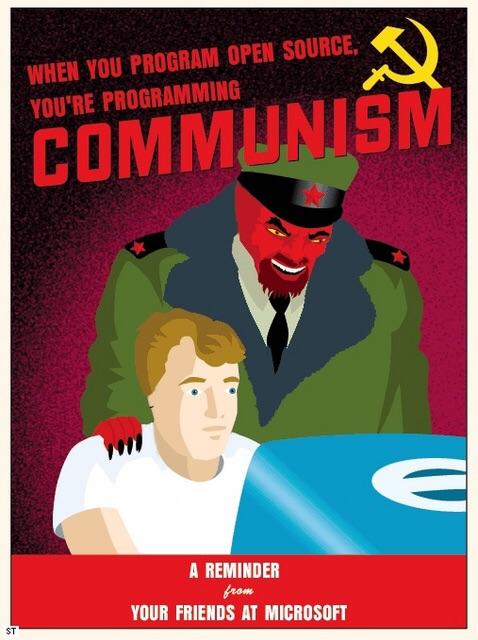

Well, yeah, but perhaps that just means they don’t know who the actual communists are, so they’ll still target the “commie” demonrats.

AI/ML research has long been notorious for choosing bullshit benchmarks that make your approach look good, and then nobody ever uses it because it’s not actually that good in practice.

It’s totally possible that there will be legitimate NLP use-cases where this approach makes sense, but that is almost entirely separate from the current LLM craze. Also, transformer-based models pretty much entirely supplanted recurrent networks in basically every NLP task as early as 2018 or so. So even if the semiconductor industry massively reoriented toward producing chips that support “MatMul-free” models like this one, which is what it would take to get any energy reduction at all, the model outputs would still be even more garbage than they already are.
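
For context on what “MatMul-free” buys you: the comment doesn’t spell out the mechanism, so the following assumes the common trick of constraining weights to the ternary values -1, 0 and +1 (an assumption here, not something stated in this thread). With such weights, a matrix-vector product collapses into additions and subtractions, which is where any energy saving on dedicated hardware would come from. This is a minimal pure-Python sketch; the function names are hypothetical, and the per-layer scaling and activation quantization that real implementations use are left out.

```python
from typing import List


def ternary_matvec(weights: List[List[int]], x: List[float]) -> List[float]:
    """Matrix-vector product with every weight restricted to -1, 0 or +1.

    +1 adds the input, -1 subtracts it and 0 skips it, so no multiplications occur.
    (Hypothetical helper; real models also rescale the result, omitted here.)
    """
    out = []
    for row in weights:
        acc = 0.0
        for w, xi in zip(row, x):
            if w == 1:
                acc += xi          # +1 weight: add the input
            elif w == -1:
                acc -= xi          # -1 weight: subtract the input
            elif w != 0:
                raise ValueError("weights must be -1, 0 or +1")
        out.append(acc)
    return out


def dense_matvec(weights: List[List[float]], x: List[float]) -> List[float]:
    """Ordinary multiply-accumulate version, for comparison."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


W = [[1, 0, -1], [-1, 1, 1]]
x = [2.0, 3.0, 5.0]
print(ternary_matvec(W, x))  # [-3.0, 6.0]
print(dense_matvec(W, x))    # [-3.0, 6.0]
```

Both functions compute the same result; the point is only that the ternary version never multiplies. Whether that saving survives contact with real accuracy requirements is exactly what the comment above is skeptical about.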

I’m highly skeptical of this at first glance. Replacing self-attention with gated recurrent units seems like a decisive step back in natural language processing capabilities. The advance that gave rise to LLMs in the first place was the realization that building networks out of stacked self-attention blocks, instead of recurrent units like GRUs or LSTMs, was extremely effective.
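
To make the recurrence-vs-attention difference concrete, here is a toy pure-Python sketch under loud assumptions: the recurrence is a simplified GRU-style scan over scalar inputs (no reset gate, made-up scalar weights), and the attention is single-head scaled dot-product self-attention with identity query/key/value projections and no causal mask. Both function names are hypothetical. The recurrence has to squeeze everything seen so far into one hidden state updated step by step, while attention lets every position look at every other position directly.

```python
import math
from typing import List

Vector = List[float]


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def gated_recurrence(xs: List[float], wz: float = 0.5, uz: float = 0.5,
                     wh: float = 1.0, uh: float = 0.5) -> List[float]:
    """Simplified GRU-style scan over scalar inputs (no reset gate; toy weights).

    Each step only sees the current input and the previous hidden state h,
    so information from early tokens must survive every intermediate update.
    """
    h = 0.0
    states = []
    for x in xs:
        z = sigmoid(wz * x + uz * h)            # update gate
        candidate = math.tanh(wh * x + uh * h)  # proposed new state
        h = (1.0 - z) * h + z * candidate       # blend old state and candidate
        states.append(h)
    return states


def self_attention(xs: List[Vector]) -> List[Vector]:
    """Single-head scaled dot-product self-attention, identity Q/K/V projections.

    Every output position directly weighs every input position in one step.
    """
    d = len(xs[0])
    outputs = []
    for q in xs:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in xs]
        m = max(scores)                          # subtract max for numerical stability
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        weights = [e / total for e in exps]      # softmax over all positions
        outputs.append([sum(w * v[i] for w, v in zip(weights, xs)) for i in range(d)])
    return outputs


print(gated_recurrence([0.5, -1.0, 2.0]))
print(self_attention([[1.0, 0.0], [0.0, 1.0]]))
```

Each attention output row is a softmax-weighted average of all the input rows, and the rows for different positions can be computed independently, whereas the gated scan must run strictly in order.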

In short, they are proposing an older type of model, one that is generally outclassed by the attention-based transformers that power all the LLMs we see today. I doubt it will come anywhere near the results of existing LLMs. I foresee this type of research being used to deflect criticism of the ungodly amounts of energy LLMs use: “See, people are working on making them way more efficient! Any day now…” Meanwhile, those efficiency gains will never come to fruition.

Yes, once you become a member you are expected to contribute to the annual fund drive.

I think RCP is the Avakian cult; isn’t that just a US thing? But they’re different from RCA.